Chapters

On this page

Introduction

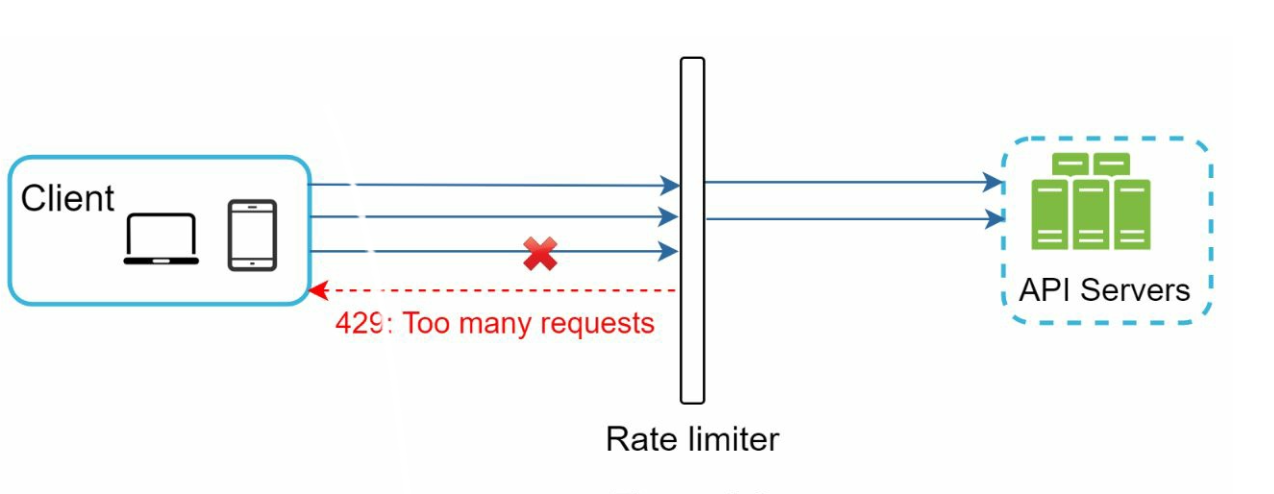

This chapter explores the design and implementation of a rate limiter—a system component used to control traffic rates sent by clients or services. Rate limiters are crucial for preventing abuse, reducing costs, and ensuring the stability of server resources. Examples of their use include limiting posts, account creations, and reward claims.

Benefits of Rate Limiting

- Preventing DoS Attacks: Blocking excess calls to avoid resource starvation.

- Cost Reduction: Limiting unnecessary requests to reduce server expenses.

- Preventing Overloads: Filtering out excessive requests to stabilize server performance.

Step 1: Understanding the Problem

Key Features

- Server-side API rate limiter.

- Support for multiple throttle rules.

- Handle large-scale systems in distributed environments.

- Option for a standalone service or application-level code.

- Inform users when throttled.

Requirements

- Accurate request throttling.

- Minimal latency.

- Low memory usage.

- Distributed capability.

- Clear exception handling.

- High fault tolerance.

Step 2: High-Level Design

Placement Options

- Client-Side Implementation: Unreliable due to potential misuse.

- Server-Side Implementation: Preferred for control and reliability.

- Middleware (API Gateway): A flexible option for integrated rate limiting.

Guidelines for Placement

- Evaluate current tech stack and choose efficient options.

- Select appropriate algorithms based on business needs.

- Use an API gateway if microservices are employed.

- Opt for commercial solutions if resources are limited.

Step 3: Rate Limiting Algorithms

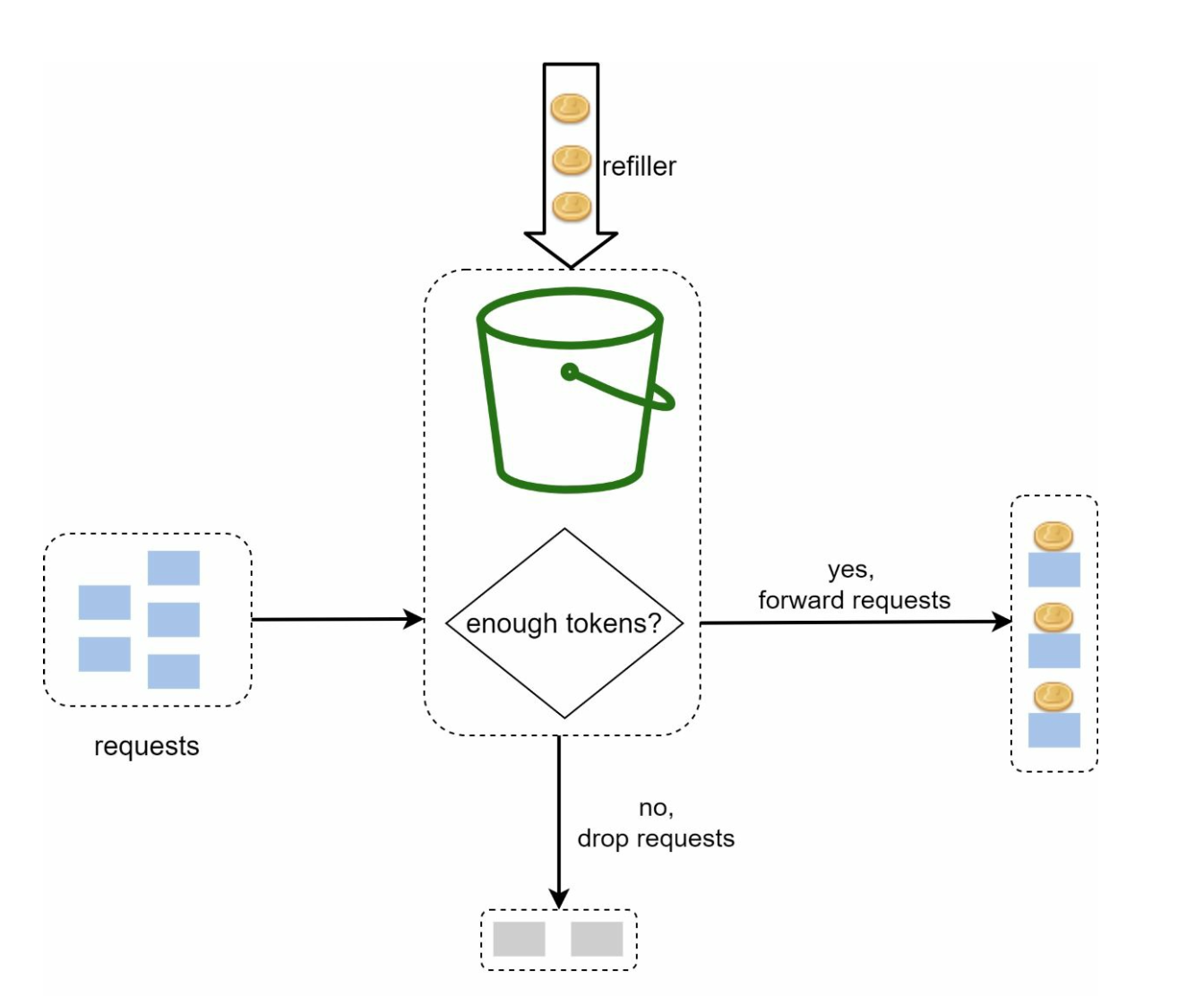

1. Token Bucket

- Description: Tokens are added to a bucket at a fixed rate; each request consumes a token.

- Parameters: Bucket size and refill rate.

- Pros: Easy to implement, memory-efficient, supports traffic bursts.

- Cons: Requires careful parameter tuning.

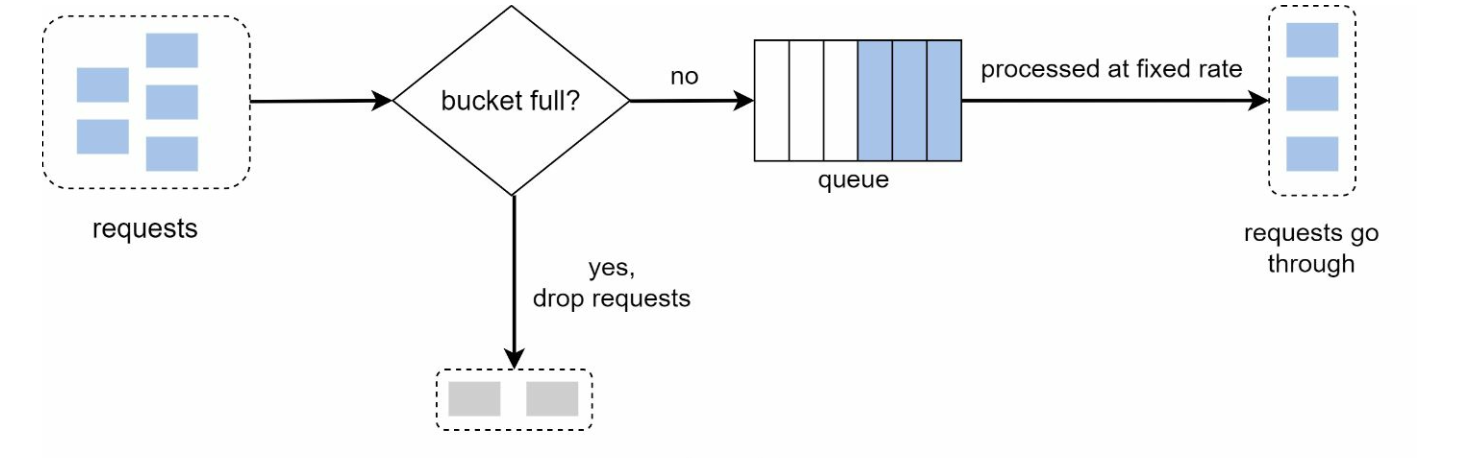

2. Leaking Bucket

-

Description: Processes requests at a fixed rate using a FIFO queue.

-

Pros: Memory-efficient, stable outflow rate.

-

Cons: Traffic bursts may delay recent requests.

Example: https://github.com/uber-go/ratelimit

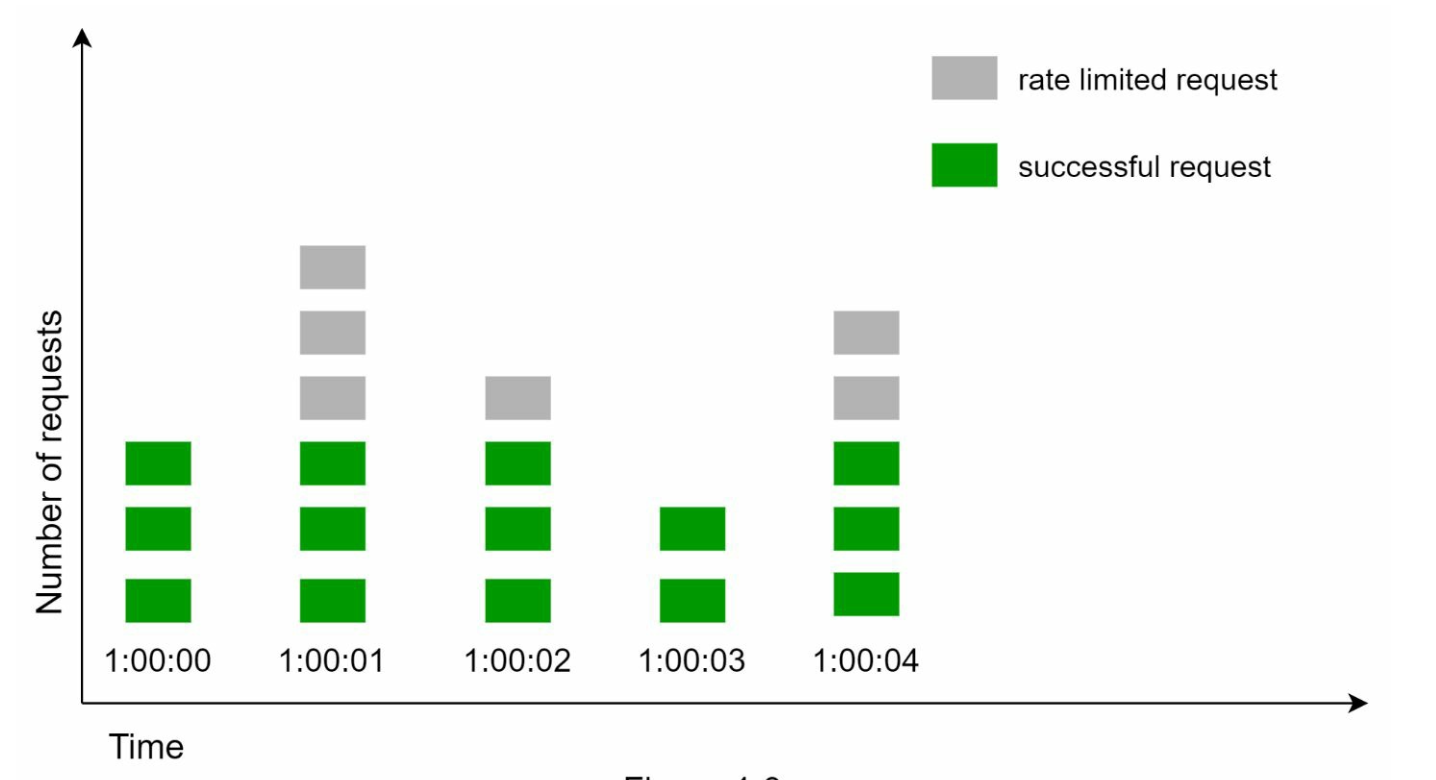

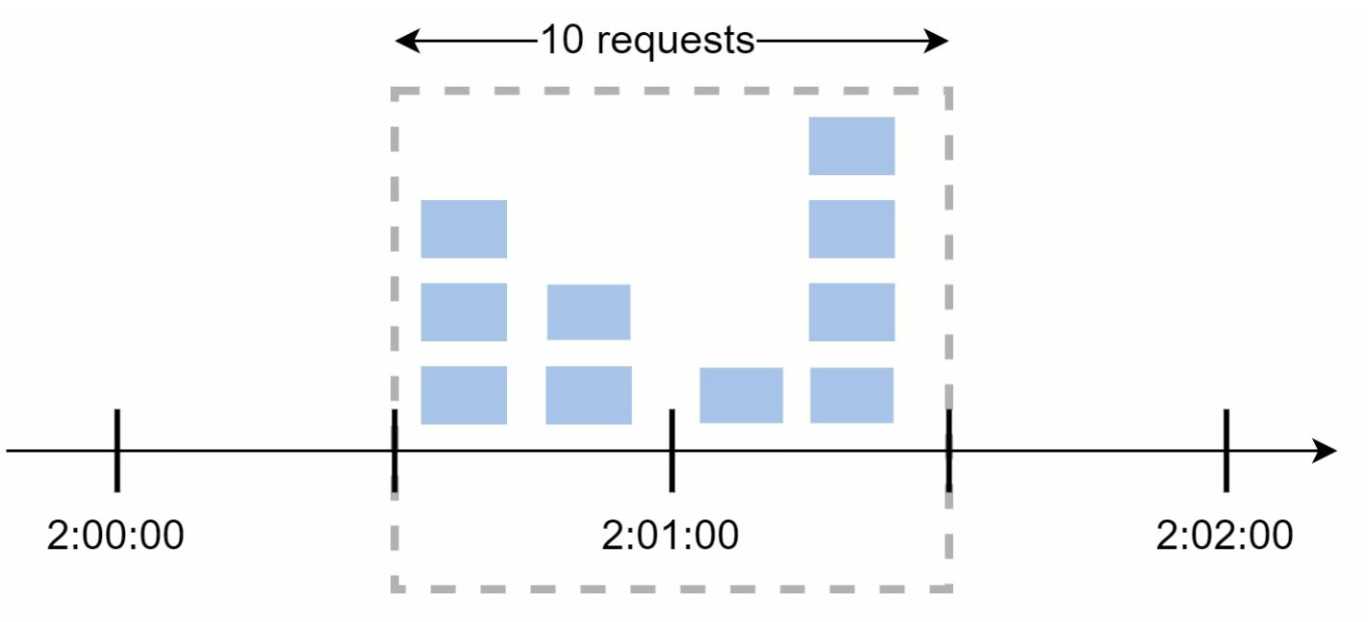

3. Fixed Window Counter

-

Description: Divides time into fixed intervals and uses counters to limit requests.

-

Pros: Simple, efficient for specific use cases.

-

Cons: Traffic spikes at window edges can exceed limits.

-

Sudden burst of traffic at the edges of time windows could cause more requests than allowed quota to go through.

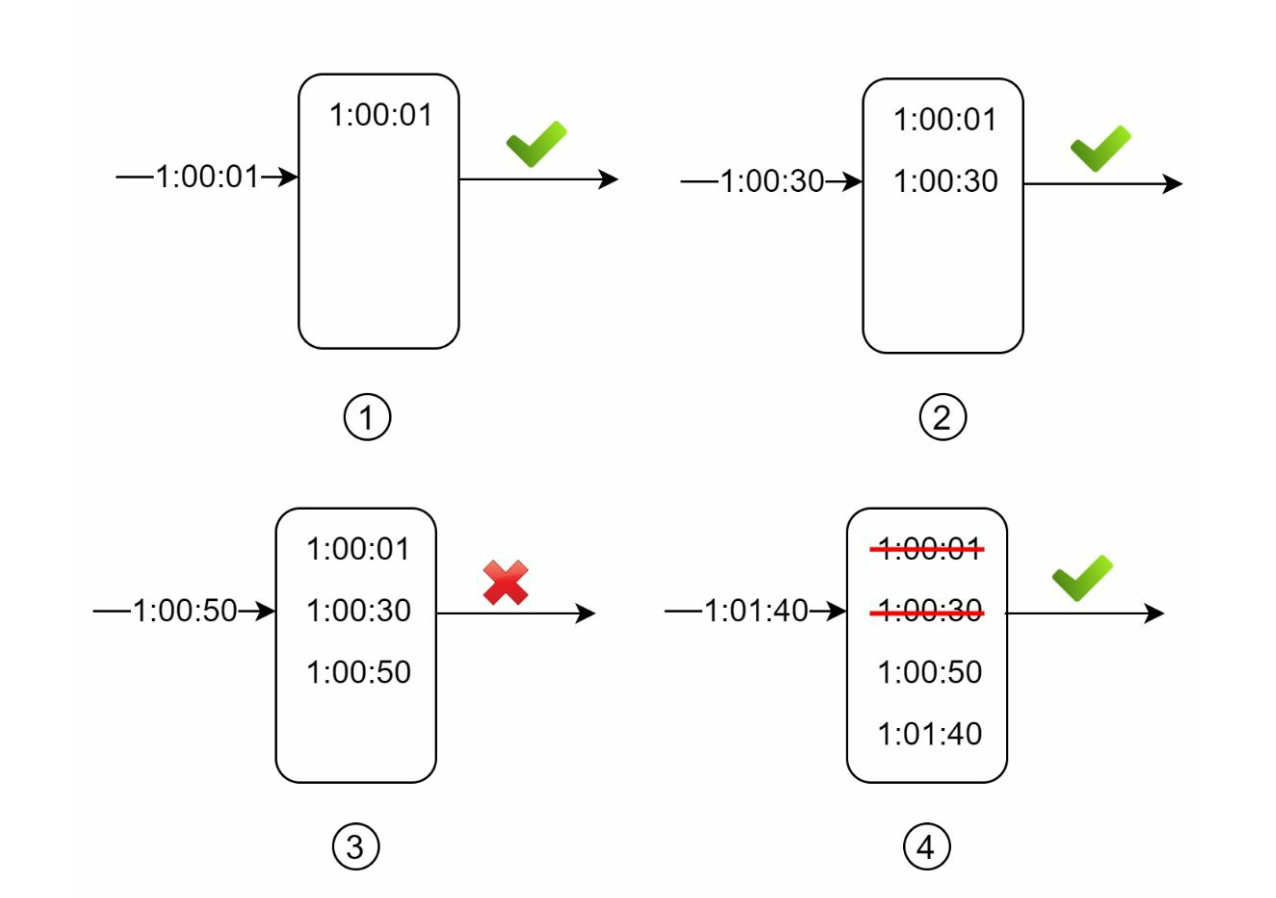

4. Sliding Window Log

- Description: Tracks timestamps to allow a rolling time window.

- Pros: Accurate rate limiting.

- Cons: High memory consumption.

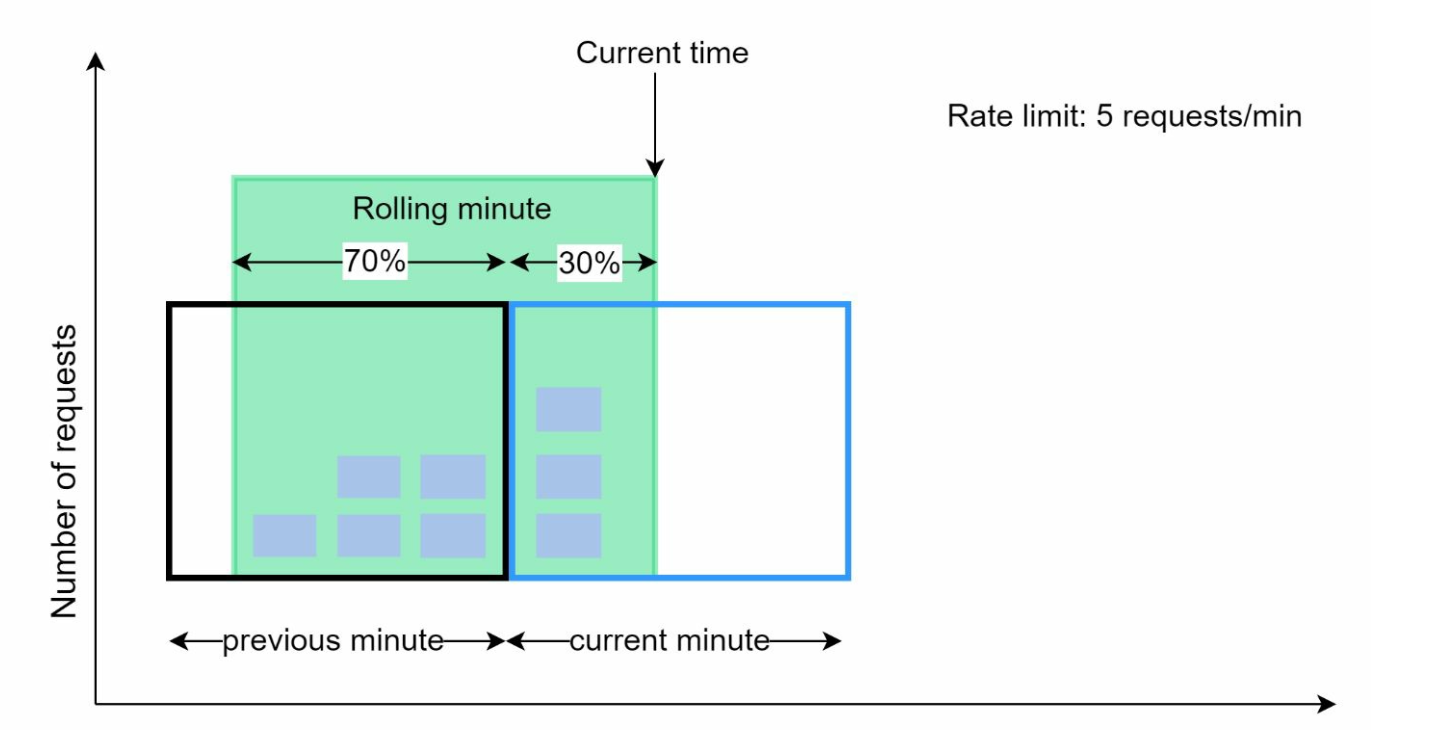

5. Sliding Window Counter

- Description: Combines fixed window and sliding log methods for smoothing spikes.

- Pros: Memory-efficient, handles traffic bursts.

- Cons: Approximation may not be perfectly strict.

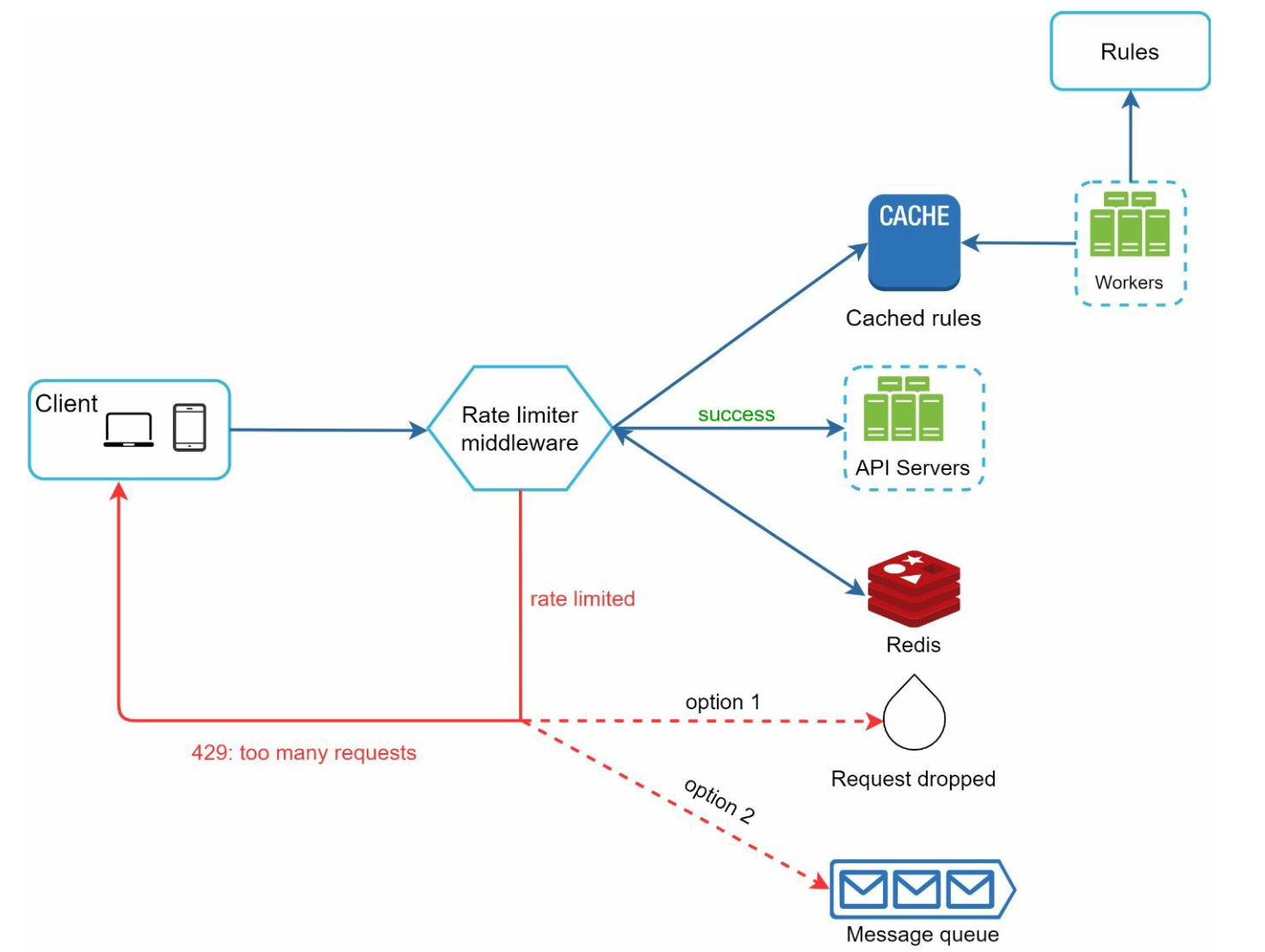

High-Level Architecture

- Data Storage: Use in-memory caching (e.g., Redis) for fast counter operations.

- Steps:

- Client sends request to middleware.

- Middleware checks counters in Redis.

- Request is processed or rejected based on limits.

Advanced Considerations

Distributed Environments

- Challenges: Race conditions, synchronization issues.

- Solutions: Use locks, Lua scripts, or sorted sets in Redis. Employ centralized data stores for synchronization.

Performance Optimizations

- Multi-data center setups for reduced latency.

- Eventual consistency models for synchronization.

Monitoring

- Regular analytics to ensure algorithm effectiveness and adjust rules as needed.