Introduction

A news feed system displays a constantly updating list of posts (status updates, photos, videos, and links) from a user’s connections. Examples include Facebook’s news feed, Instagram’s feed, and Twitter’s timeline. This chapter explores the design of a scalable news feed system.

Step 1: Understanding the Problem

Requirements

- Platform: The system supports both web and mobile apps.

- Features:

- Users can publish posts.

- Users can view posts from friends in their news feed.

- Sorting: Feeds are sorted in reverse chronological order for simplicity.

- Scale:

- Users can have up to 5,000 friends.

- 10 million daily active users (DAU).

- Feeds may include text, images, and videos.
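
To get a feel for these numbers, the following back-of-envelope sketch estimates an upper bound on feed writes if every post were pushed to every friend. The posting rate of one post per user per day is an assumption made only for illustration; it does not come from the requirements.

```python
DAILY_ACTIVE_USERS = 10_000_000   # from the requirements
MAX_FRIENDS = 5_000               # from the requirements
POSTS_PER_USER_PER_DAY = 1        # assumption, not stated in the requirements
SECONDS_PER_DAY = 24 * 60 * 60


def worst_case_feed_writes_per_second(
    dau: int = DAILY_ACTIVE_USERS,
    max_friends: int = MAX_FRIENDS,
    posts_per_user_per_day: float = POSTS_PER_USER_PER_DAY,
) -> float:
    """Upper bound: every active user posts and has the maximum friend count."""
    posts_per_day = dau * posts_per_user_per_day
    feed_writes_per_day = posts_per_day * max_friends
    return feed_writes_per_day / SECONDS_PER_DAY


if __name__ == "__main__":
    print(f"{worst_case_feed_writes_per_second():,.0f} feed writes per second")
```

Real friend counts are far below the maximum for most users, so this is a ceiling rather than an expected load.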

Step 2: High-Level Design

Overview

The design includes two main flows:

- Feed Publishing: A user publishes a post, which is written to the database and propagated to their friends’ feeds.

- News Feed Building: A user retrieves their news feed by aggregating posts from friends in reverse chronological order.

News Feed APIs

- Feed Publishing API:

- Endpoint: POST /v1/me/feed

- Params: content (post text) and auth_token (authentication).

- News Feed Retrieval API:

- Endpoint: GET /v1/me/feed

- Params: auth_token (authentication).
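
As an illustration, the two calls could be built on the client side roughly as follows. The host name `api.example.com`, the JSON body, and passing `auth_token` as a body field or query parameter are assumptions; the chapter only specifies the paths and parameter names. The requests are constructed but not sent.

```python
import json
import urllib.parse
import urllib.request

BASE_URL = "https://api.example.com"  # hypothetical host, not from the chapter


def build_publish_request(content: str, auth_token: str) -> urllib.request.Request:
    """Build POST /v1/me/feed carrying the post text and the auth token."""
    body = json.dumps({"content": content, "auth_token": auth_token}).encode("utf-8")
    return urllib.request.Request(
        url=f"{BASE_URL}/v1/me/feed",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def build_feed_request(auth_token: str) -> urllib.request.Request:
    """Build GET /v1/me/feed with the auth token as a query parameter."""
    query = urllib.parse.urlencode({"auth_token": auth_token})
    return urllib.request.Request(url=f"{BASE_URL}/v1/me/feed?{query}", method="GET")
```

Passing a request to `urllib.request.urlopen` would send it; in practice the token would more commonly travel in an authorization header.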

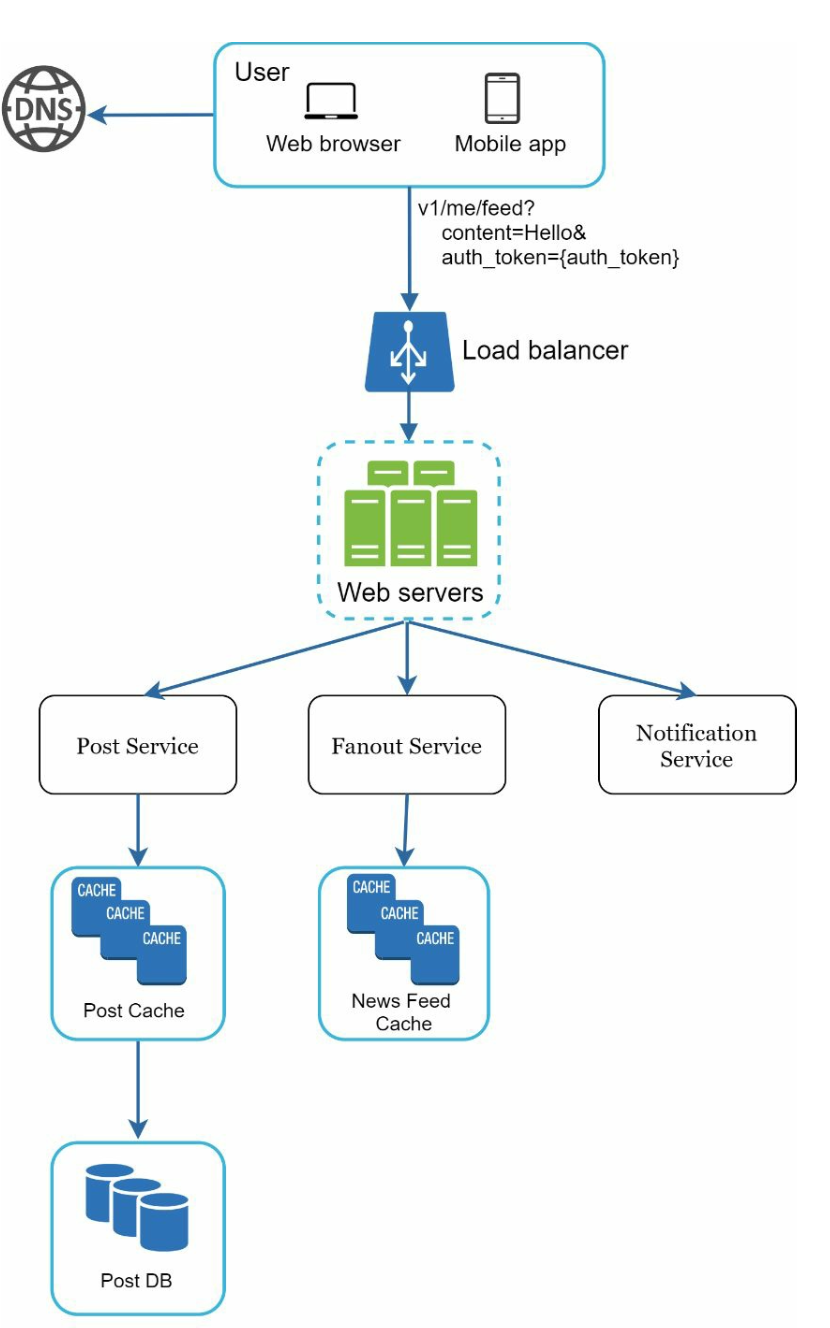

Feed Publishing

- User Interaction: The user publishes a post via the feed publishing API.

- Load Balancer: Distributes traffic to web servers.

- Web Servers: Authenticate requests and route them to the appropriate backend services.

- Post Service: Stores the post in the database and cache.

- Fanout Service: Propagates the post to friends’ news feeds in the cache.

- Notification Service: Sends notifications to friends.

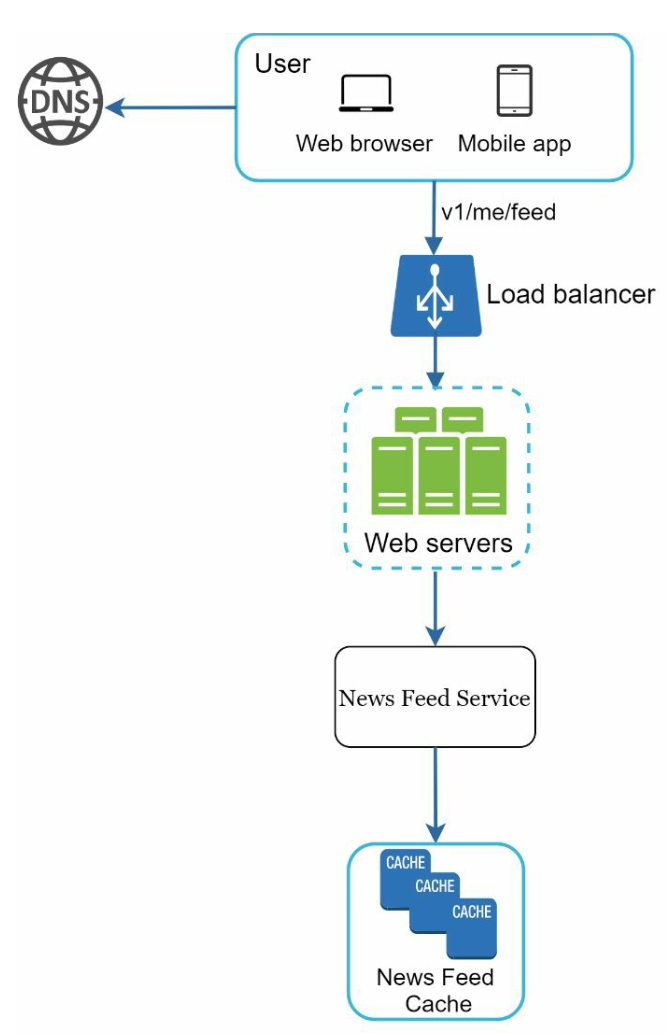

News Feed Building

- User Interaction: The user requests their news feed via the retrieval API.

- Load Balancer: Distributes traffic to web servers.

- Web Servers: Forward requests to the news feed service.

- News Feed Service: Fetches post IDs from the news feed cache and retrieves complete post details from the database or cache.
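
The last step above can be sketched as a two-stage lookup: read post IDs from the news feed cache, then hydrate each ID from the post cache, falling back to the database on a miss. Plain dictionaries stand in for the caches and the database, and the class and method names are hypothetical.

```python
from typing import Dict, List


class NewsFeedService:
    """Minimal sketch; dicts stand in for the feed cache, post cache, and database."""

    def __init__(self, feed_cache: Dict[str, List[str]],
                 post_cache: Dict[str, dict], post_db: Dict[str, dict]):
        self.feed_cache = feed_cache  # user_id -> post IDs, assumed newest first
        self.post_cache = post_cache  # post_id -> post details
        self.post_db = post_db        # post_id -> post details (source of truth)

    def get_feed(self, user_id: str, limit: int = 20) -> List[dict]:
        post_ids = self.feed_cache.get(user_id, [])[:limit]
        posts = []
        for post_id in post_ids:
            post = self.post_cache.get(post_id)
            if post is None:
                post = self.post_db.get(post_id)
                if post is None:
                    continue  # post no longer exists; skip it
                self.post_cache[post_id] = post  # populate the cache for next time
            posts.append(post)
        return posts
```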

Step 3: Design Deep Dive

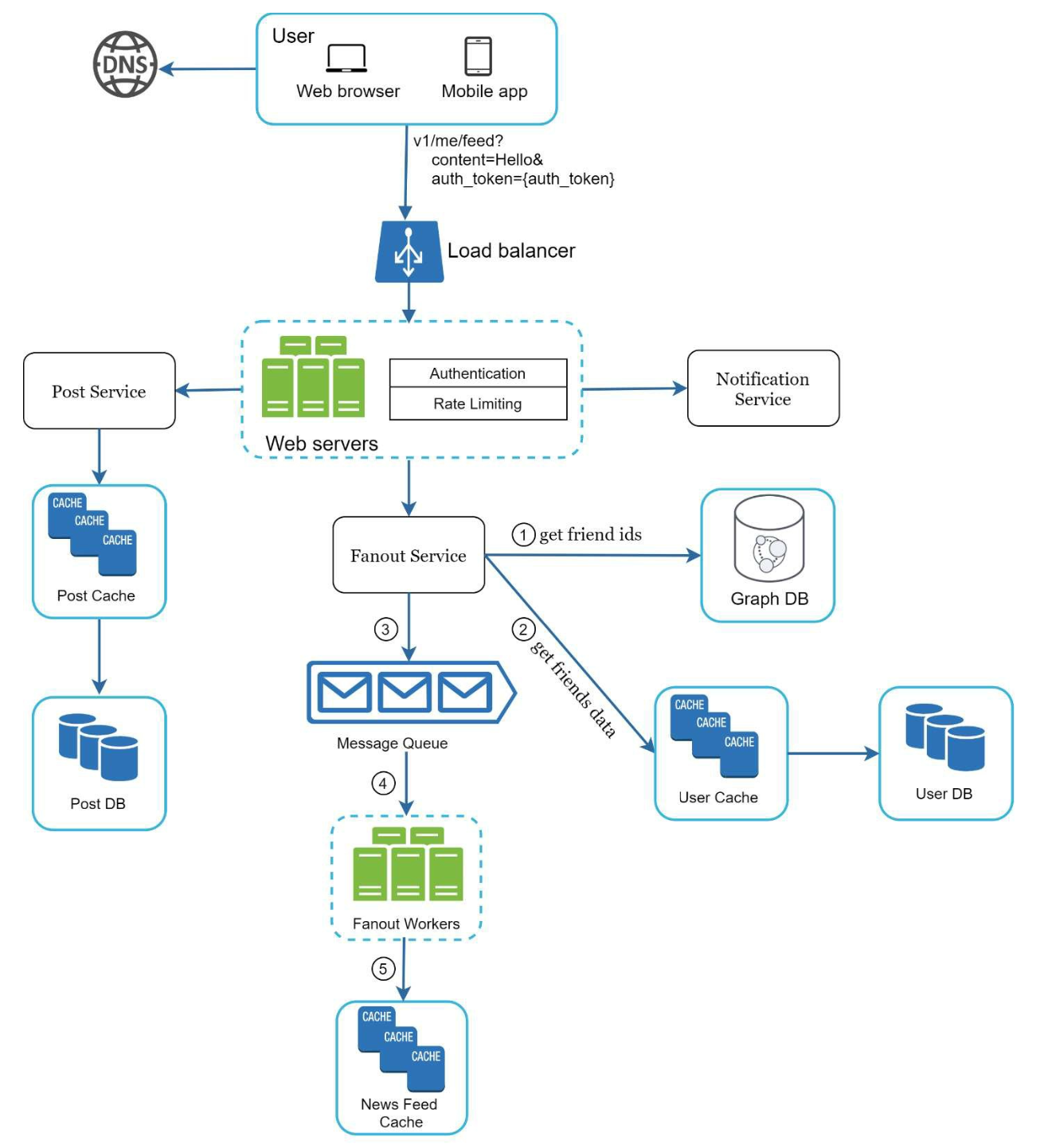

Feed Publishing Deep Dive

- Web Servers:

- Authenticate users using auth_token.

- Enforce rate limits to prevent spam.
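
The chapter does not say which rate-limiting algorithm the web servers use. As one possible approach, here is a minimal token bucket sketch: each user gets a bucket that refills at a fixed rate, and a publish request is rejected when the bucket is empty. The class name and parameters are hypothetical.

```python
import time
from typing import Optional


class TokenBucket:
    """Token bucket limiter: allows bursts up to `capacity`, refills continuously."""

    def __init__(self, capacity: int, refill_per_second: float,
                 now: Optional[float] = None):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)
        self.last_refill = time.monotonic() if now is None else now

    def allow(self, now: Optional[float] = None) -> bool:
        """Return True and consume a token if the request may proceed."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

The optional `now` argument exists only to make the limiter easy to exercise with a fake clock; a server would keep one bucket per user or per token.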

- Fanout Service:

- Fanout on Write: Push posts to friends’ feeds at write time.

- Pros: Real-time updates, fast feed retrieval.

- Cons: Resource-intensive for users with many friends.

- Fanout on Read: Pull posts at read time.

- Pros: Efficient for inactive users.

- Cons: Slower feed retrieval.

- Hybrid Approach: Use a push model for most users and a pull model for high-connection users (e.g., celebrities).
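
A rough sketch of the hybrid approach: a threshold decides whether an author's posts are pushed or pulled, and at read time the pre-computed (pushed) entries are merged with posts pulled from high-connection authors. The threshold value, the function names, and the `(timestamp, post_id)` tuple representation are assumptions for illustration.

```python
from typing import Iterable, List, Tuple

Post = Tuple[int, str]  # (timestamp, post_id); hypothetical representation

PUSH_CONNECTION_LIMIT = 1_000  # hypothetical cutoff; the chapter gives no number


def fanout_mode(connection_count: int, limit: int = PUSH_CONNECTION_LIMIT) -> str:
    """Push for ordinary users, pull for high-connection users."""
    return "push" if connection_count <= limit else "pull"


def merged_feed(pushed: Iterable[Post],
                pulled_sources: Iterable[Iterable[Post]],
                limit: int = 20) -> List[str]:
    """Combine pushed entries with pulled posts, newest first."""
    candidates = list(pushed)
    for posts in pulled_sources:
        candidates.extend(posts)
    candidates.sort(reverse=True)  # reverse chronological by timestamp
    return [post_id for _, post_id in candidates[:limit]]
```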

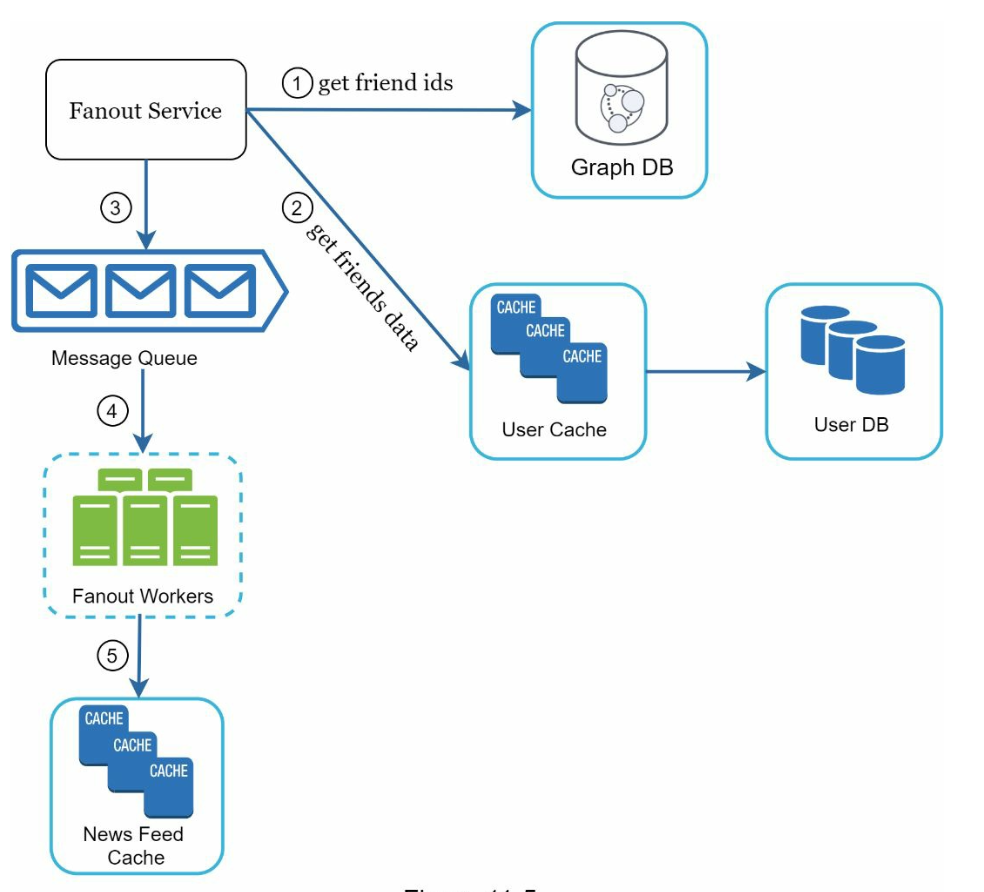

The fanout service works as follows:

- Fetch Friend IDs: Retrieve the friend list from a graph database.

- Filter Friends from Cache: Access user settings in the cache to exclude certain friends (e.g., muted friends or selective sharing preferences).

- Send to Message Queue: Send the filtered friend list along with the new post ID to a message queue for processing.

- Fanout Workers: Workers retrieve data from the message queue and update the news feed cache. The cache stores <post_id, user_id> mappings instead of full user and post objects to save memory.

- Store in News Feed Cache: Append new post IDs to the friends’ news feed cache. A configurable limit ensures that only recent posts are stored. Because most users only look at the latest content, this keeps cache memory consumption manageable.
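
The steps above can be sketched in a single process as follows. Dictionaries stand in for the graph database and the settings cache, `queue.Queue` stands in for the message queue, and the feed limit of 3 is deliberately tiny; all names and values are hypothetical.

```python
import queue
from collections import defaultdict, deque
from typing import Dict, List, Set

FEED_LIMIT = 3  # hypothetical; a real limit would be far larger and configurable


def new_feed_cache() -> Dict[str, deque]:
    """user_id -> bounded deque of post IDs, newest first."""
    return defaultdict(lambda: deque(maxlen=FEED_LIMIT))


def enqueue_fanout(author_id: str, post_id: str,
                   graph: Dict[str, List[str]],
                   muted_by: Dict[str, Set[str]],
                   message_queue: queue.Queue) -> None:
    friend_ids = graph.get(author_id, [])                  # 1. fetch friend IDs
    recipients = [f for f in friend_ids                    # 2. filter using settings
                  if author_id not in muted_by.get(f, set())]
    message_queue.put((post_id, recipients))               # 3. send to the queue


def run_fanout_worker(message_queue: queue.Queue, feed_cache: Dict[str, deque]) -> None:
    while True:                                            # 4. worker drains the queue
        try:
            post_id, recipients = message_queue.get_nowait()
        except queue.Empty:
            break
        for user_id in recipients:
            # 5. store only the post ID; maxlen silently evicts the oldest entry
            feed_cache[user_id].appendleft(post_id)
```

A bounded `deque` is used here only to show the configurable limit; a production system would typically use a shared cache rather than in-process memory.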

News Feed Retrieval Deep Dive

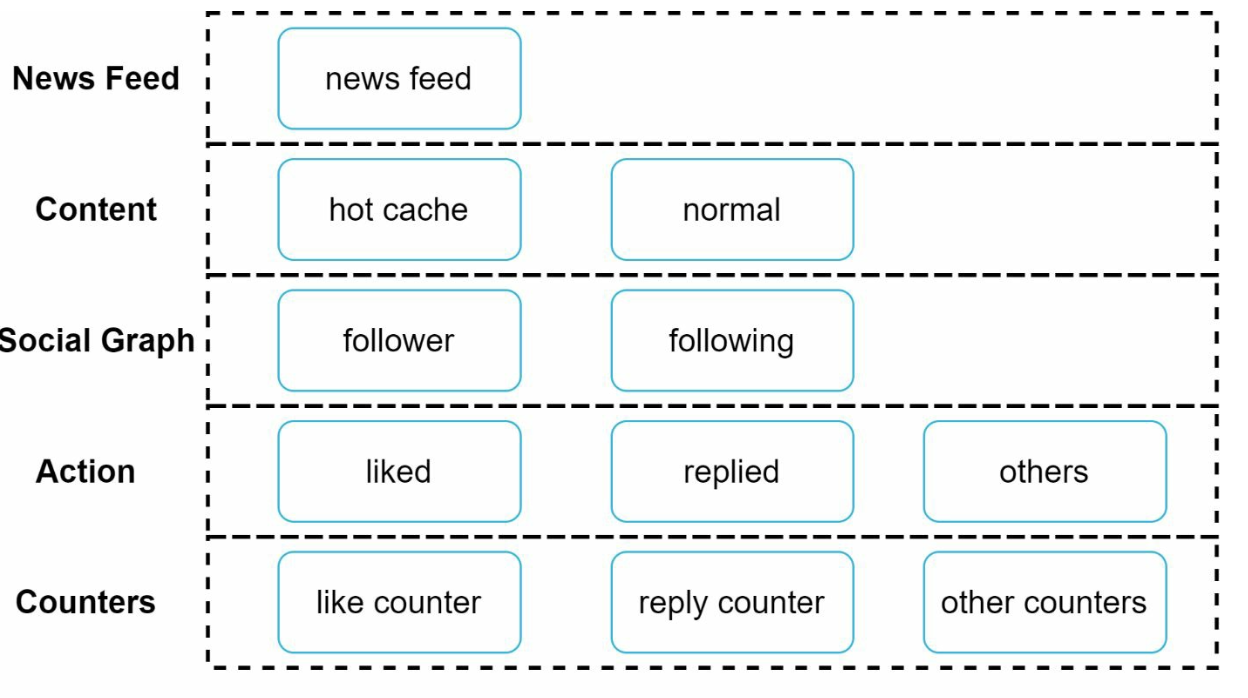

Cache Architecture

The cache is divided into five layers:

- News Feed Cache: Stores post IDs for quick retrieval.

- Content Cache: Stores post details; popular posts are kept in a hot cache.

- Social Graph Cache: Stores user relationship data.

- Action Cache: Tracks user actions (likes, replies, shares).

- Counter Cache: Maintains counts for likes, replies, followers, etc.
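
To show how the action and counter caches might cooperate, here is a minimal sketch for likes: the action cache records who liked a post, and the counter cache is incremented only the first time a given user likes it. The class and its in-memory dictionaries are hypothetical stand-ins for real cache servers.

```python
from collections import defaultdict


class LikeCache:
    """Sketch of an action cache (who liked what) plus a counter cache (how many)."""

    def __init__(self):
        self.liked_by = defaultdict(set)    # action cache: post_id -> user IDs
        self.like_count = defaultdict(int)  # counter cache: post_id -> count

    def like(self, user_id: str, post_id: str) -> bool:
        """Record a like; return False if this user already liked the post."""
        if user_id in self.liked_by[post_id]:
            return False
        self.liked_by[post_id].add(user_id)
        self.like_count[post_id] += 1
        return True

    def count(self, post_id: str) -> int:
        return self.like_count.get(post_id, 0)
```

Keeping the count separate means a feed can show like totals without reading the full set of users who liked the post.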

Key Optimizations

Scaling

- Database Scaling:

- Horizontal scaling and sharding.

- Use of read replicas for high-traffic queries.

- Stateless Web Tier: Keep web servers stateless to enable horizontal scaling.

Caching

- Store frequently accessed data in memory.

- Use cache layers to reduce latency and database load.

Reliability

- Consistent Hashing: Distribute requests and data evenly across servers, so that adding or removing a server remaps only a small fraction of keys.

- Message Queues: Decouple system components and buffer traffic.
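
For reference, a consistent hash ring can be sketched in a few lines. Each server is placed on the ring at several points (virtual nodes), and a key is served by the first server point at or after the key's hash, wrapping around at the end. The choice of SHA-256, the virtual node count, and the class name are assumptions, not details from the chapter.

```python
import bisect
import hashlib
from typing import Iterable, List, Tuple


def _hash(key: str) -> int:
    return int(hashlib.sha256(key.encode("utf-8")).hexdigest(), 16)


class HashRing:
    def __init__(self, servers: Iterable[str], virtual_nodes: int = 100):
        self._ring: List[Tuple[int, str]] = []  # sorted (point, server) pairs
        for server in servers:
            self.add(server, virtual_nodes)

    def add(self, server: str, virtual_nodes: int = 100) -> None:
        for i in range(virtual_nodes):
            bisect.insort(self._ring, (_hash(f"{server}#{i}"), server))

    def remove(self, server: str) -> None:
        self._ring = [entry for entry in self._ring if entry[1] != server]

    def lookup(self, key: str) -> str:
        if not self._ring:
            raise LookupError("hash ring is empty")
        index = bisect.bisect_left(self._ring, (_hash(key), ""))
        if index == len(self._ring):
            index = 0  # wrap around to the start of the ring
        return self._ring[index][1]
```

When a server is removed, only the keys that server owned move elsewhere; every other key keeps its assignment, which limits cache misses during scaling events.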

Monitoring

- Track key metrics like QPS (queries per second) and latency.

- Monitor cache hit rates and adjust configurations accordingly.
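
As a small illustration of the metrics above, the following sketch tracks cache hits and misses and derives a hit rate and an average QPS over a measurement window. The class name and the idea of passing the window length explicitly are assumptions; a real deployment would rely on a metrics system rather than hand-rolled counters.

```python
class CacheStats:
    """Counts cache lookups and derives hit rate and average QPS."""

    def __init__(self):
        self.hits = 0
        self.misses = 0

    def record(self, hit: bool) -> None:
        if hit:
            self.hits += 1
        else:
            self.misses += 1

    def hit_rate(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0

    def qps(self, window_seconds: float) -> float:
        """Average lookups per second over the given window."""
        if window_seconds <= 0:
            raise ValueError("window_seconds must be positive")
        return (self.hits + self.misses) / window_seconds
```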